This LLMrefs review guide is the right place to start if you are trying to figure out whether you actually need a dedicated tool for AI search visibility in 2026.

LLMrefs is built to show how often your brand is mentioned, cited, and ranked in AI assistant responses, including ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude, turning those AI responses into structured metrics you can use in real SEO and content workflows.

As AI-generated answers grab more attention than traditional blue links, the question shifts from “Do I rank in Google?” to “Do AI systems recommend me at all?” and this review walks through how LLMrefs tackles that problem, where it shines, and when it might be more tool than you actually need.

So, without any further ado, let’s get started.

Quick Takeaway From LLMrefs Review

- Best for: Teams that want keyword-based AI visibility metrics across major AI assistants.

- Pricing: One All-in-One plan at $79/month with 500 prompts and all engines included.

- Why SEOs like it: It plugs directly into existing keyword lists and reports AI rankings, share of voice, and citations in a way that fits normal SEO and reporting workflows.

- Limitations: Newer tool, some learning curve, and no permanent free or ultra‑cheap starter tier.

- Alternative: Radarkit, with real-browser AI checks and a lower starting price of $29/month.

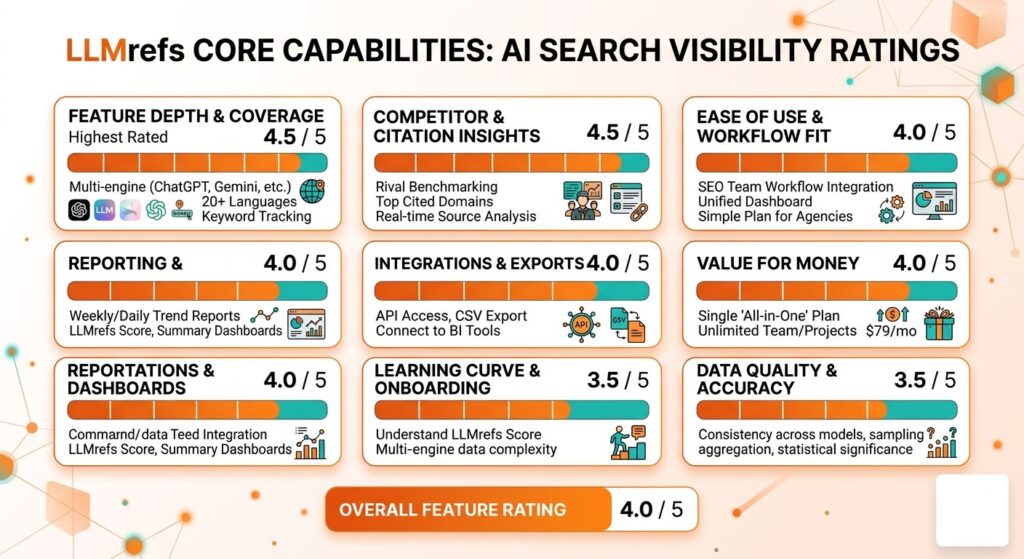

LLMrefs AI Tracker Capabilities Ratings

- Feature depth and coverage: 4.5/5

- Ease of use and workflow fit: 4.0/5

- Data quality and accuracy: 3.5/5

- Reporting and dashboards: 4.0/5

- Competitor and citation insights: 4.5/5

- Integrations and exports (API/CSV): 4.0/5

- Learning curve and onboarding: 3.5/5

- Value for money: 4.0/5

- Overall feature rating: 4.0/5

What is LLMrefs and Why Do You Need It?

LLMrefs is an AI-driven SEO and generative search analytics platform built to enhance your brand’s visibility across AI search engines and AI answer engines such as ChatGPT, Google AI Overviews, Perplexity, Gemini, Claude, and Grok.

Instead of tracking classic blue-link rankings in Google, it measures where, how often, and how prominently your brand, pages, and keywords appear inside AI-generated answers, along with which competitors are cited in your place.

This matters because AI assistants and chat-style interfaces are becoming a primary way users get information, often replacing or reducing traditional SERP clicks.

LLMrefs gives marketers actionable visibility metrics such as share of voice, citation frequency, rankings across models, and a proprietary LLMrefs Score so they can see how they perform in AI search and where they are losing ground to competitors.

By focusing on keyword-based tracking, competitor analysis, and AI visibility scores, LLMrefs helps you understand not just whether your brand is mentioned, but in what context, how consistently, and compared to whom across leading LLMs.

This makes LLMrefs more than a simple tracker; it is a strategic tool for adapting content, link-building, and GEO/AEO efforts to the unique dynamics of AI visibility and share of voice in AI-powered search.

Who Is LLMrefs AI Search Visibility For?

LLMrefs is built for teams that care about how they show up inside AI answers, not just classic Google rankings.

It is especially useful wherever brand visibility, demand generation, or SEO strategy increasingly depend on AI search and AI answer engines.

Brands and In‑House Marketing Teams

These teams use LLMrefs to track how often their brand and key products appear across AI assistants like ChatGPT, Google AI Overviews, Perplexity, Gemini, Claude, and Grok.

They rely on its keyword-based tracking, share‑of‑voice metrics, and citation data to justify investment in AI SEO and to shape content and link-building around the topics where AI engines currently favor competitors.

Agencies and SEO Consultants

Agencies and SEO specialists use LLMrefs to report AI search visibility and share of voice for multiple clients in a structured way.

Unlimited users and projects, exports, and API access make it fit naturally into client reporting, GEO/AEO audits, and ongoing optimization retainers.

B2B Growth, Product, and Analytics Teams

Growth and analytics teams at B2B and SaaS companies use LLMrefs to connect AI search presence with awareness and pipeline, especially where buying journeys now start in AI tools instead of classic SERPs.

They benchmark against competitors on core categories and problem keywords to see whether AI assistants are recommending them or sending demand elsewhere.

Brand and Reputation Owners

Brand managers and communications teams use LLMrefs as a next‑generation brand monitoring layer focused on AI answer engines.

It helps them see which sources AI systems cite when talking about their brand, where they are omitted, and which content or communities (for example, specific blogs or Reddit threads) they need to influence to earn more positive visibility.

Who Gets the Most Value

LLMrefs is most valuable for brands, agencies, and marketing teams that already invest in SEO/content and now need clear, repeatable metrics for AI search visibility.

If your role involves brand visibility, SEO strategy, or reputation management in a world where AI systems increasingly shape what people see and trust, LLMrefs can be a worthwhile, strategically important addition to your stack.

LLMrefs Core Features That Actually Matter for AI Visibility

Here are the useful core features we found while writing the LLMrefs Review.

Keyword-Based AI Visibility Tracking

LLMrefs lets you track AI visibility by keywords, not by manually crafted prompts, which means you can plug your existing SEO keyword sets straight into AI search tracking.

This removes guesswork around “what to ask” each model, because the platform automatically handles prompt generation, sampling, and aggregation for those keywords at scale.

By doing this across ChatGPT, Google AI Overviews/AI Mode, Perplexity, Gemini, Claude, Grok, and more, and supporting 50+ countries and 20+ languages, you get a single, consistent view of how visible your brand is for each important query in the global AI search landscape.

That is especially useful for international brands or agencies that need to understand AI visibility by market without running endless manual tests.

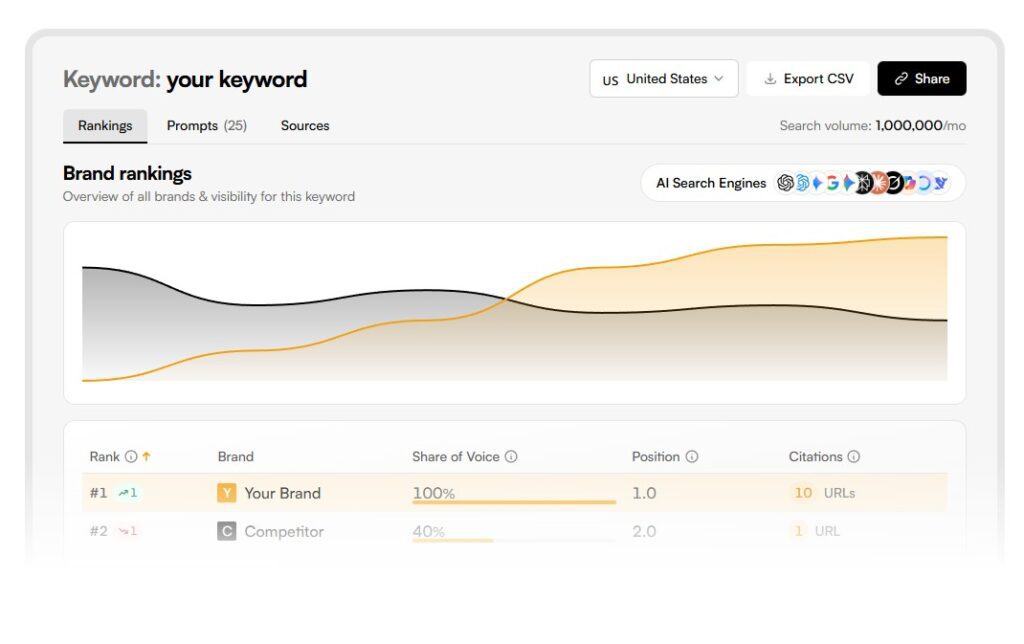

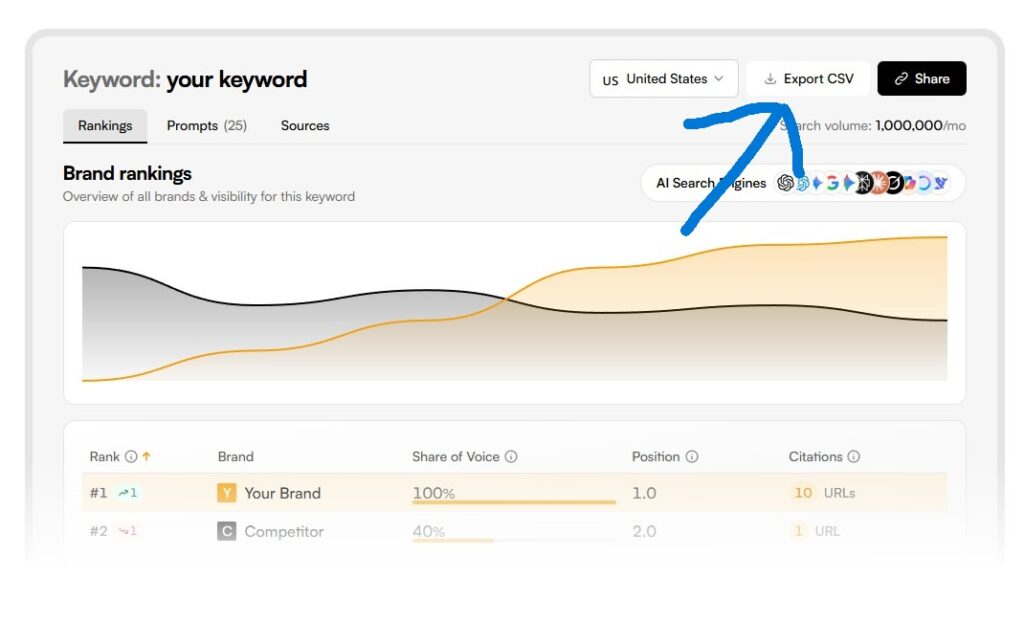

Share of Voice, Rankings, and LLMrefs Score

For every tracked keyword, LLMrefs calculates where and how often your brand appears in AI answers, then rolls this into rankings, share of voice, and a proprietary LLMrefs Score (LS).

The LS factors in ranking position, frequency of mentions, and consistency across models and prompt variations, giving you a single indicator of “how strong” your AI visibility really is.

This is useful because traditional SEO metrics (like Google rank in one country) no longer tell you if AI assistants are actually recommending you.

With share of voice and LS, you can quickly see whether your AI presence is improving, which campaigns move the needle, and where competitors are outscoring you in AI search.
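As a rough illustration of the share-of-voice idea, the sketch below counts how often each brand is mentioned across a set of sampled AI answers and converts the counts into shares. The sample answers, the brand names, and the `share_of_voice` helper are all hypothetical. This is not how LLMrefs computes its proprietary LLMrefs Score; it only shows the basic mention-share arithmetic under a simple substring-matching assumption.

```python
from collections import Counter


def share_of_voice(answers, brands):
    """Return each brand's share of total brand mentions, as a fraction.

    Assumption: a brand counts at most once per answer, matched as a
    case-insensitive substring. Real tools likely match more carefully.
    """
    counts = Counter()
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        # No brand was mentioned in any answer.
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}


# Hypothetical sampled answers for a single tracked keyword.
sample_answers = [
    "Popular options include Acme CRM and Globex.",
    "Many startups choose Globex for its pricing.",
    "Acme CRM, Globex, and Initech are often recommended.",
]
sov = share_of_voice(sample_answers, ["Acme CRM", "Globex", "Initech"])
```

In this toy sample, Globex appears in all three answers, so it holds the largest share. Tracking the same calculation over time is what turns individual AI answers into a trend you can report on.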

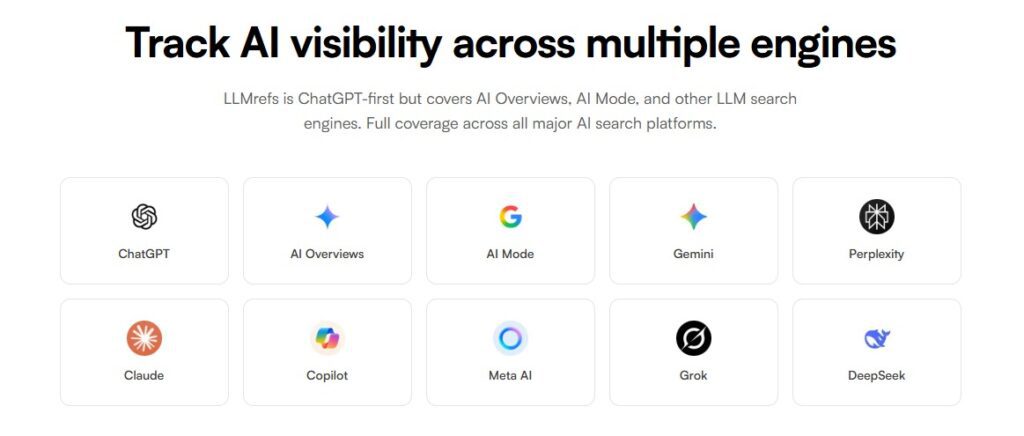

AI Visibility Across Multiple Engines

LLMrefs tracks how visible your brand and keywords are across many leading AI search engines and assistants in one place.

It runs standardized queries, sampled until the results are statistically significant, across models like ChatGPT, Google AI Overviews/AI Mode, Perplexity, Gemini, Claude, Grok, Copilot, Meta AI, and DeepSeek, then aggregates results so you can see your overall AI presence instead of checking each platform manually.

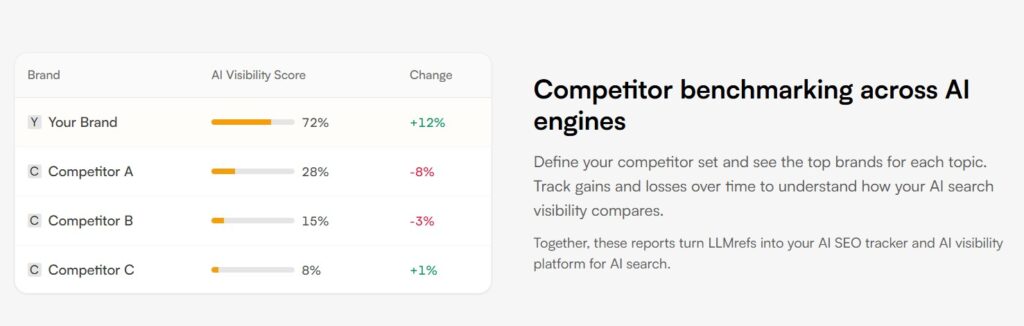

Competitor Benchmarking Across AI Engines

LLMrefs doesn’t just show your numbers in isolation; it benchmarks your brand against named competitors on the same keywords and AI engines.

You can see which rival brands are being cited more often, who dominates “best tools” and “alternatives” prompts, and how your LS and share of voice compare over time.

This makes AI SEO much more actionable. Instead of only knowing that you “appear” somewhere in AI answers, you can identify: topics where you’re already winning, topics where a particular competitor owns most recommendations, and new opportunity areas where nobody is yet strongly represented.

That directly informs content roadmaps, PR priorities, and link-building targets.

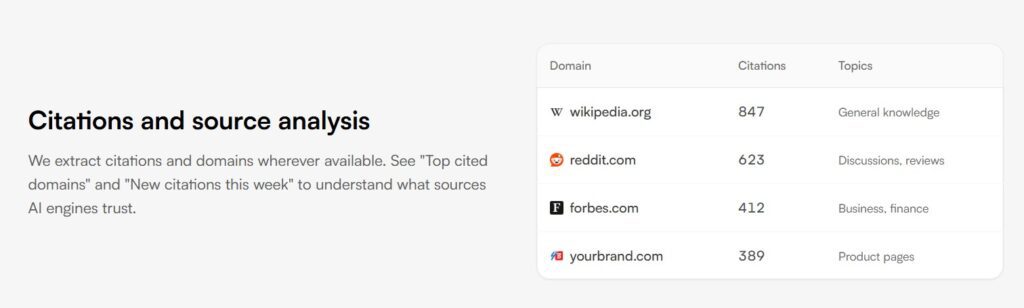

Citation and Source Analysis

A key LLMrefs feature is showing which domains and URLs AI models actually cite when answering questions around your keywords.

You get lists of top-cited domains, new citations over a period, and page-level views of which URLs are being referenced for specific topics.

This is powerful because it reveals the real “trust graph” behind AI answers.

Instead of guessing which links and publications matter, you can see which sites models lean on today and target those places with content, digital PR, partnerships, or guest posts.

It also highlights where competitors are getting cited, so you can plan how to displace or join them in those same ecosystems.

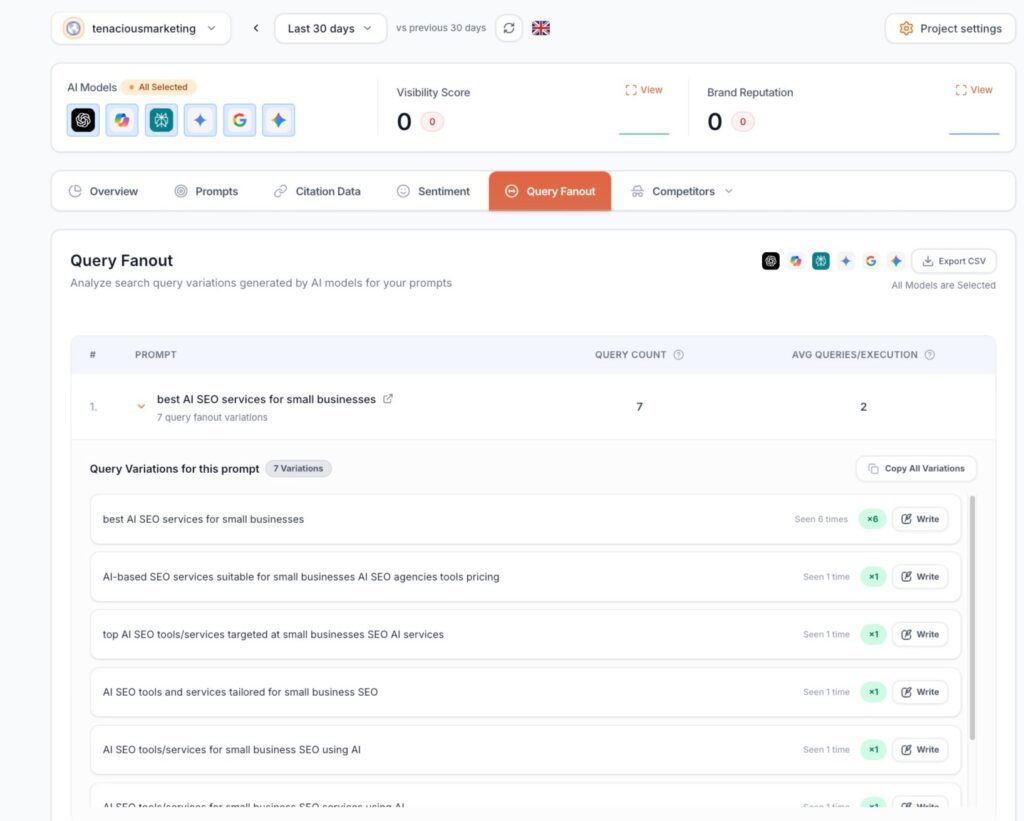

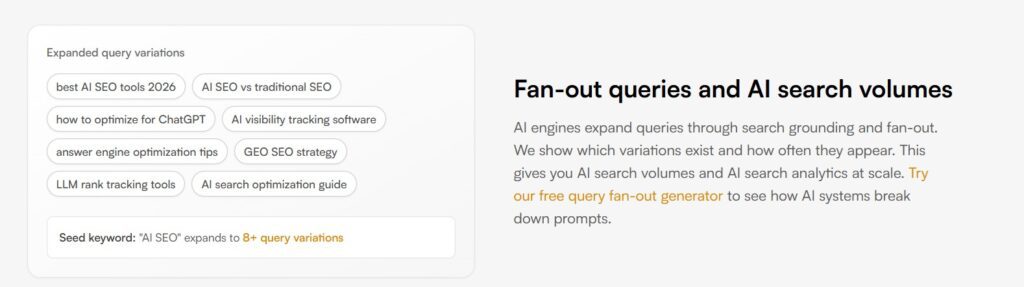

Auto-Generated Prompts and Fan-Out Queries

LLMrefs automatically generates prompts from real user conversations and common query patterns for your keywords, such as “best X tools,” “X alternatives,” comparisons, and how‑to queries.

It then runs these fan‑out prompts across AI engines until it reaches statistically significant samples, aggregating the results back to each keyword.

The usefulness here is twofold: you save huge amounts of manual prompt-writing time, and you get a more realistic, user-like picture of how AI tools talk about your category.

You can discover which query formulations drive the most exposure, where you are absent in high-intent prompts, and how AI assistants phrase questions you might want to target with content and FAQs.
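To make the fan-out idea concrete, here is a minimal sketch that expands one keyword into several prompt variations. It is a simplification: LLMrefs says it derives prompts from real user conversations, whereas this example uses a fixed, hypothetical template list (`TEMPLATES`) and a hypothetical `fan_out` helper modeled on the query patterns mentioned above.

```python
# Hypothetical templates based on the patterns named in this section:
# "best X tools", "X alternatives", comparisons, and how-to queries.
TEMPLATES = [
    "best {kw} tools",
    "{kw} alternatives",
    "{kw} vs competitors: which is better?",
    "how to choose a {kw}",
]


def fan_out(keyword, templates=TEMPLATES):
    """Expand one keyword into a list of prompt variations."""
    kw = keyword.strip()
    return [template.format(kw=kw) for template in templates]


prompts = fan_out("CRM")
for prompt in prompts:
    print(prompt)
```

Each generated prompt would then be sent to the AI engines you care about, and the responses aggregated back to the original keyword.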

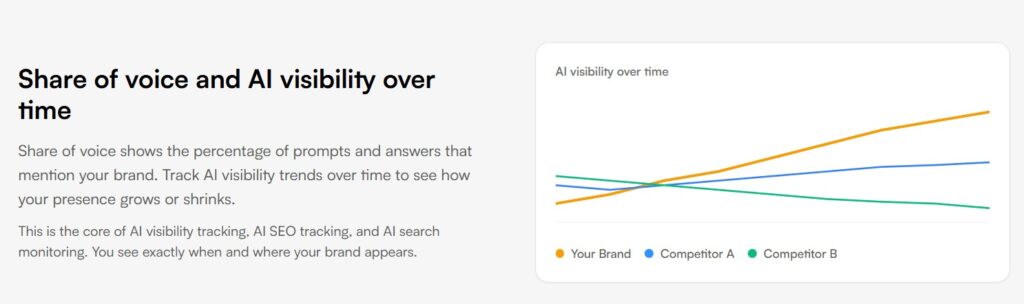

Real-Time Tracking, Dashboards, and Trend Reports

While writing this LLMrefs Review, we found that LLMrefs offers live tracking plus scheduled updates (with higher-frequency updates on bigger plans), presented through dashboards that summarize visibility, LS, share of voice, and citations.

Weekly or daily trend reports show how changes in content, links, or market activity are affecting your AI search presence over time.

This is useful for moving AI visibility out of “one‑off experiments” and into an actual KPI.

Teams can monitor whether a new product launch, content campaign, or technical fix led to better coverage in AI answers, and they can catch drops quickly if a competitor ships something that displaces them.

It also gives you reporting artifacts you can show to leadership or clients.

Data Exports, API, and Integration

LLMrefs supports CSV exports and offers an API so you can pipe AI visibility data into BI tools, custom dashboards, or broader marketing reporting stacks.

You can combine LS, share of voice, and citation metrics with web analytics, CRM, and ad data to understand how AI search visibility correlates with traffic, leads, or revenue.

The usefulness here is that AI visibility stops being an isolated metric. You can, for example, compare LS against branded organic search, measure whether improved AI coverage precedes branded search growth, or build alerts in your existing systems when your AI share of voice falls below a threshold.
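The threshold alert mentioned above could look something like the following sketch, which reads a CSV export and flags keywords where a brand's share of voice drops below a chosen level. The column names (`keyword`, `brand`, `share_of_voice`), the sample data, and the `keywords_below_threshold` helper are assumptions for illustration; the real LLMrefs export format may differ.

```python
import csv
import io

# Hypothetical export contents; real column names may differ.
EXPORT = """keyword,brand,share_of_voice
crm software,Acme CRM,0.32
crm software,Globex,0.41
sales automation,Acme CRM,0.08
"""


def keywords_below_threshold(csv_text, brand, threshold):
    """Return keywords where the brand's share of voice is under the threshold."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        row["keyword"]
        for row in reader
        if row["brand"] == brand and float(row["share_of_voice"]) < threshold
    ]


alerts = keywords_below_threshold(EXPORT, "Acme CRM", 0.10)
for keyword in alerts:
    print(f"Share of voice below threshold for: {keyword}")
```

In practice you would read the exported file from disk or fetch the data through the API, then route the flagged keywords into whatever alerting system your team already uses.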

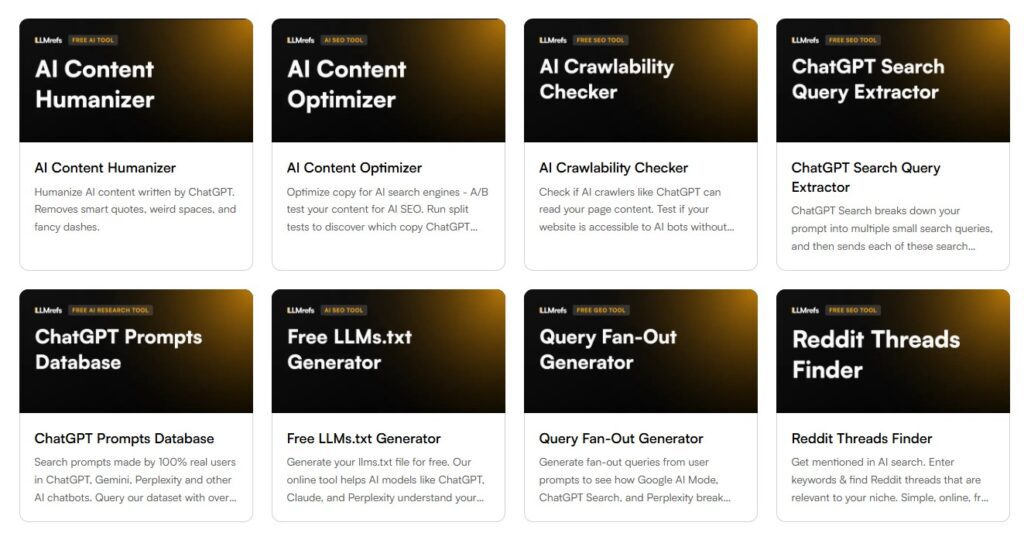

Additional AI SEO Utilities

Alongside its core tracking, LLMrefs bundles several free AI SEO tools designed to help you act on AI visibility insights, not just observe them. These utilities cover content tuning, technical readiness for AI crawlers, and research into the sources and queries AI systems rely on.

The included tools are:

- AI Content Humanizer

- AI Content Optimizer (AI Content A/B Tester)

- AI Crawlability Checker

- ChatGPT Search Query Extractor (ChatGPT Query Extractor)

- ChatGPT Prompts Database

- Free LLMs.txt Generator (LLMs.txt Generator)

- Query Fan-Out Generator

- Reddit Threads Finder

Together, these tools help you humanize and test AI content, ensure your site is crawlable and correctly signposted for LLMs, mine real ChatGPT queries, generate robust prompt sets, and discover Reddit discussions that often feed into AI training and answer patterns.

LLMrefs Review: Plan and Pricing

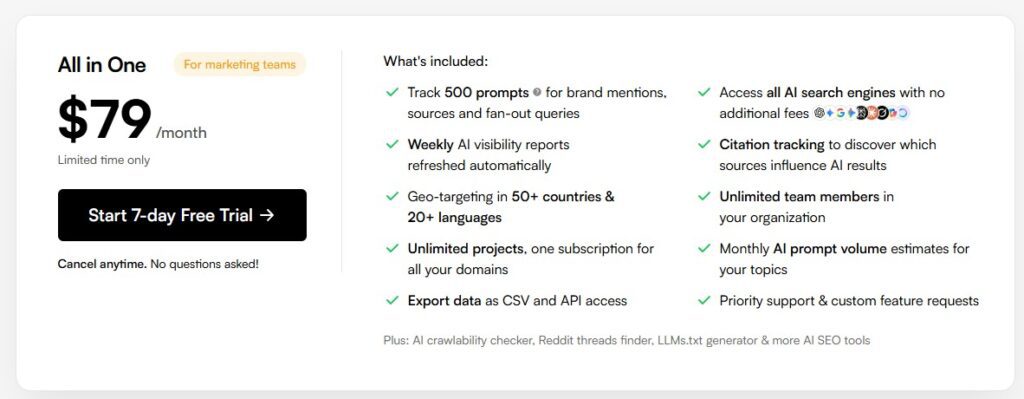

Pricing is one of the most important parts of this LLMrefs review. LLMrefs currently offers a single “All in One” plan aimed at marketing teams, priced at $79 per month on a limited-time basis.

The plan includes tracking for 500 prompts covering brand mentions, sources, and fan‑out queries, with access to all supported AI search engines at no extra cost.

Within this plan, you also get weekly AI visibility reports, citation tracking to see which sources influence AI results, and geo‑targeting in 50+ countries and 20+ languages, plus unlimited team members and unlimited projects under one subscription for all your domains.

The package includes monthly AI prompt volume estimates, CSV export and API access, priority support, and bundled access to extra AI SEO tools such as the AI Crawlability Checker, Reddit Threads Finder, LLMs.txt Generator, and more.

LLMrefs Review: Pros and Cons

Like any AI SEO tool, LLMrefs has strengths and weaknesses, and this review covers both.

Pros

- Purpose-built for AI search visibility and citations: LLMrefs is designed specifically to track keyword-based visibility, rankings, and citations across AI engines like ChatGPT, Google AI Overviews/AI Mode, Perplexity, Gemini, Claude, Grok, Copilot, and others, rather than classic SERP ranks only.

- Strong multi-engine coverage from one dashboard: You can see how your brand and competitors perform for the same keywords across many AI assistants in one place, with geo-targeting for 50+ countries and 20+ languages. This saves hours of manual testing and gives a realistic, cross-engine picture of your AI presence.

- Clear AI share of voice and LLMrefs Score: The platform rolls rankings, frequency of mentions, and coverage across prompts into share-of-voice metrics and a proprietary LLMrefs Score, making it easier to benchmark topics and brands instead of drowning in raw prompt-by-prompt data.

- Useful competitor and citation intelligence: LLMrefs highlights which competitors appear alongside (or instead of) you and which domains/URLs AI engines cite for your topics, helping you target specific content, PR, and backlink opportunities that actually influence AI answers.

- Good fit for agencies and teams with multiple brands: With a single “All in One” plan including unlimited team members and unlimited projects, it is attractive for agencies and in-house groups managing several domains who need AI visibility reports as a recurring deliverable.

- Bundled AI SEO utilities add execution power: Free tools like AI Content Humanizer, AI Content Optimizer, AI Crawlability Checker, ChatGPT Query Extractor, ChatGPT Prompts Database, LLMs.txt Generator, Query Fan-Out Generator, and Reddit Threads Finder help move from insight to action without extra subscriptions.

- Pricing is simple and transparent at the entry level: LLMrefs has a single All-in-One plan at $79 per month, including 500 prompts and full engine coverage, which is competitive versus some credit-based or enterprise-only AI visibility tools.

Cons (Limitations)

- Newer platform with limited historical depth: LLMrefs launched in 2025, so it naturally has less long-term historical trend data and a shorter track record than older SEO suites, which matters if you want multi‑year AI visibility histories or very mature ecosystems.

- No true free tier: There is no permanent free plan; you need to pay to use it beyond any trials, which can be a barrier for small teams that want to experiment slowly or compare multiple AI visibility tools side by side.

- Feature depth comes with a learning curve: Reviews note that while the feature set is powerful, understanding LLMrefs Score, fan‑out queries, and multi-engine data can take time, and less-technical users may initially find the dashboards and options overwhelming.

- Credit/scale limits for larger programs: Even though the public All in One plan is simple, third-party reviews point out that keyword/prompt limits and refresh frequencies can feel tight at scale, and higher tiers or custom usage can increase costs without fully transparent public pricing.

- No enterprise-grade compliance yet: As of 2026, LLMrefs does not advertise SOC 2 or similar enterprise compliance, which may be a blocker for large enterprises with strict security and procurement requirements.

LLMrefs Customer Review and Constructive Feedback

LLMrefs reviews are generally positive among AI‑focused marketers, SEOs, and agencies, who say it solves a real gap by showing how brands appear inside AI answers, not just in Google rankings.

Users highlight that keyword-based tracking, auto‑generated prompts, and broad coverage across engines like ChatGPT, Google AI Overviews, Perplexity, and Gemini make the data easy to use in real SEO and content workflows.

Many also appreciate the simple all-in-one plan at $79 per month, which includes unlimited users, unlimited projects, and bundled AI SEO tools, making it attractive for teams managing multiple brands.

Constructive feedback in LLMrefs reviews focuses on maturity and accessibility rather than the tool's core value.

Because LLMrefs is still a relatively new product, reviewers note that it offers less long-term historical data than older SEO platforms and that understanding concepts like AI share of voice and the LLMrefs Score can involve a learning curve for non‑specialists.

Some users also feel that the absence of a permanent free tier or cheaper starter plan makes it harder for very small teams or solo creators to adopt the tool long term, even though the pricing is seen as fair for agencies and established marketing teams.

LLMrefs Alternative To Consider

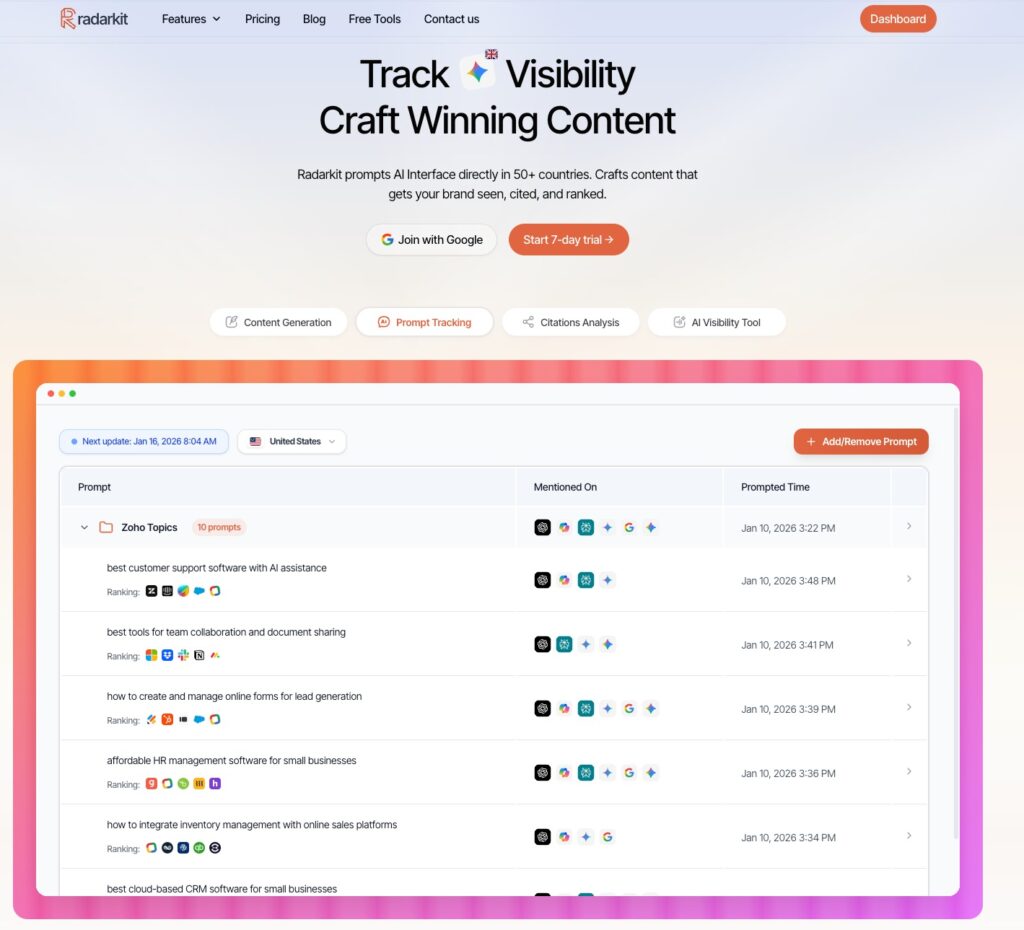

While our LLMrefs Review shows LLMrefs as a strong option for AI search visibility, Radarkit is a solid alternative for teams that want AI visibility data tightly tied to what real users see and how that traffic performs.

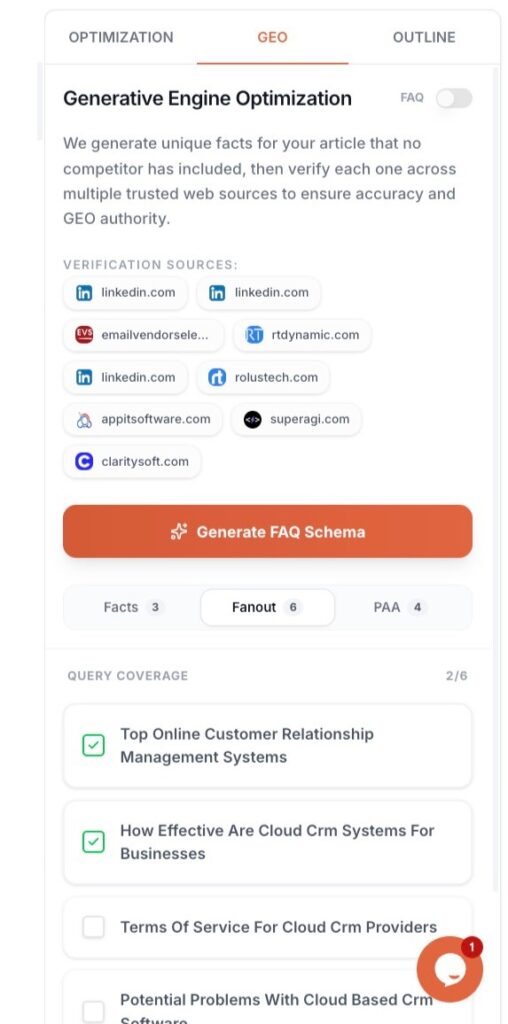

It tracks how your brand and competitors appear for chosen keywords directly inside live AI assistants such as ChatGPT, Perplexity, Gemini, Copilot, and Google AI Mode, using real-browser prompts rather than just APIs.

This lets you see positions, share of voice, and which sources are cited in actual AI answers, then connect that visibility to sessions and conversions via GA4 so you can judge business impact, not just mentions.

Radarkit also highlights pages that AI frequently cites, helping you identify link and content opportunities that can improve both AI visibility and traditional SEO.

Its public plans start at $29 per month for Lite, with higher tiers adding more prompts, projects, and deeper analytics, making it attractive for smaller teams and agencies that want a more budget-friendly entry point than many competing AI visibility tools.

Radarkit’s Unbeatable Features

Query Fanouts

Enter a prompt like “affordable CRM for startups” into Radarkit, and you can see the additional queries and resources an LLM explores under the hood—the fanouts behind the main answer.

That insight helps you design content that aligns with the research path the model is already following, increasing your chances of being surfaced and cited.

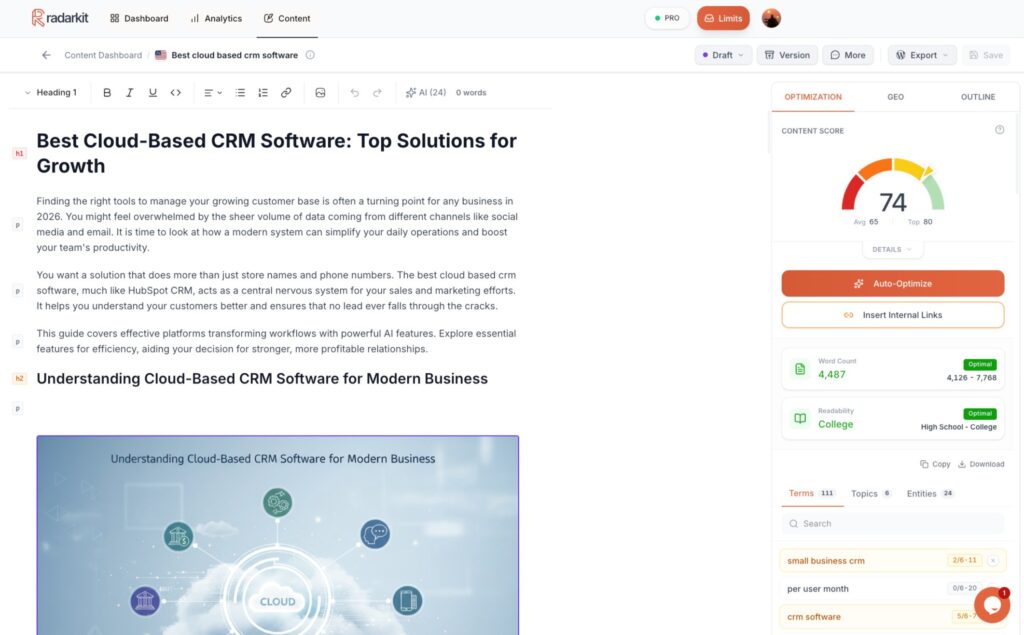

GEO Writer

GEO Writer uses NLP and citation data drawn from competing pages to generate an article that includes the right facts, PAAs, and semantic signals to perform well in both the SERP and LLM-generated responses.

UI-Based Tracking from 50+ Countries

Radarkit routes checks through residential IPs in many countries, so you can see how AI assistants respond from different locations and understand how visibility shifts across markets.

FAQs: LLMrefs Review

How is LLMrefs different from a normal SEO rank tracker?

LLMrefs focuses on AI search visibility rather than classic blue-link rankings, showing how often your brand is mentioned and cited inside answers from tools like ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews, instead of just where you rank in Google’s organic results.

What kind of data does LLMrefs give me about my brand?

It aggregates AI responses into metrics such as brand mentions, citation frequency to your pages, AI rankings, and share of voice versus competitors, so you can see whether AI systems use you as a trusted source and how that changes over time.

Who gets the most value from using LLMrefs?

Teams that already invest in SEO and content (especially B2B, SaaS, and agencies) get the most value, because they can plug in their keyword lists and use LLMrefs data to refine content, link-building, and positioning specifically for AI answer engines.

Can LLMrefs replace my existing SEO tools?

No, LLMrefs is a complement rather than a replacement: you still need traditional SEO tools for keyword research, technical fixes, and classic SERP rankings, while LLMrefs adds a new GEO/AEO layer that shows how you perform inside AI-generated answers.

How do I know if my brand is “ready” for an AI visibility tracker like LLMrefs?

You’re usually ready when AI assistants already influence your buyers, and you want consistent, multi-engine data instead of manual copy-paste tests; if AI search is still a minor channel or your budget is tight, most guides suggest starting with fundamentals and lighter checks before committing to a dedicated tracker.

Final Verdict: Is LLMrefs Worth The Investment?

LLMrefs is generally worth the investment if AI search visibility is already a meaningful part of how you think about SEO, content, and brand awareness, rather than just a side experiment.

It gives you a structured, keyword-based view of how often you and your competitors appear, rank, and get cited across multiple AI assistants, translating messy AI answers into concrete metrics like rankings, share of voice, and citation data that can feed directly into content roadmaps, link-building, and reporting.

At $79 per month for the current All-in-One plan, you also get broad engine coverage, geo-tracking, competitor benchmarking, and a bundle of AI SEO utilities (like crawlability checks, llms.txt generation, and content tools), which independent reviewers repeatedly describe as strong value for serious in-house teams and agencies.

Where LLMrefs is less compelling is at the very early or very budget-constrained end of the market.

If you are just starting to explore AI visibility, only need occasional spot checks, or have not yet proven that AI assistants materially influence your audience, then the combination of learning curve and ongoing subscription cost can feel heavy, and lighter or cheaper tools might be a better starting point until AI becomes a proven channel.

It also remains more focused than a classic all-in-one SEO suite, so you will usually pair it with existing SEO tools rather than replace them, which is another reason it fits best where teams already have a mature search stack and see AI visibility as the “next layer” rather than a nice-to-have.

In short, this LLMrefs review shows that the tool tends to be worth it for B2B, SaaS, and agency teams ready to treat AI visibility as a real KPI they monitor and act on every month.

For everyone else, it is more of a “next step” once AI-driven search clearly matters to their brand and budget.